Indice

Algorithm Engineering A.A. 2022-2023

Teacher: Paolo Ferragina

Teacher: Paolo Ferragina

CFU: 9 (first semester).

Course ID: 531AA.

Language: English.

Question time: Monday: 15-17, or by appointment (also in video-conferencing through the virtual room of the course)

News: about this course will be distributed via a Telegram channel.

Official lectures schedule: The schedule and content of the lectures is available below and with the official registro.

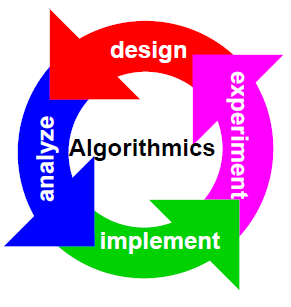

Goals

In this course we will study, design and analyze advanced algorithms and data structures for the efficient solution of combinatorial problems involving all basic data types, such as integers, strings, (geometric) points, trees and graphs. The design and analysis will involve several models of computation— such as RAM, 2-level memory, cache-oblivious, streaming— in order to take into account the architectural features and the memory hierarchy of modern PCs and the availability of Big Data upon which those algorithms could work on. We will add to such theoretical analysis several other engineering considerations spurring from the implementation of the proposed algorithms and from experiments published in the literature.

Every lecture will follow a problem-driven approach that starts from a real software-design problem, abstracts it in a combinatorial way (suitable for an algorithmic investigation), and then introduces algorithms aimed at minimizing the use of some computational resources like time, space, communication, I/O, energy, etc. Some of these solutions will be discussed also at an experimental level, in order to introduce proper engineering and tuning tools for algorithmic development.

Week Schedule of the Lectures

| Week Schedule | ||

|---|---|---|

| Day | Time Slot | Room |

| Monday | 9:00 - 11:00 | Room Fib C |

| Tuesday | 11:00 - 13:00 | Room Fib C |

| Wednesday | 11:00 - 13:00 | Room Fib C |

Exam

The exam will consist of a written test including two parts: exercises and “oral” questions. The exam is passed if in both parts the student gets a sufficient score (expressed in 30), which are then averaged.

The first (exercises) and the second (theory questions) parts of the exam can be split into different exam dates, even of different exam sessions. The exam dates are the ones indicated in the calendar on ESAMI. In the case that the second part is not passed or the student abandons the exam, (s)he can keep the rank of the first exam, but this may occur just once. The second time this happens, the rank of the first part is dropped, and the student has to do both parts again.

| Dates | Room | Text | Notes |

|---|---|---|---|

| 16/01/2023, start at 09:00 | room E | text, solution, results | Correction will occur Wednesday 18 January, at 15:00 (Ferragina's office). For registration only, also online same date-hour, please show yourself on Teams' room of the course. Students that have passed only the “exercises” part can repeat only the “theory” part on any of the following exam dates, they have to register on the portal “ESAMI” writing in the notes “only theory”. Moreover, they can come +45mins after the start of the exam, to join the class that did in the first hour the “exercises” part. |

| 10/02/2023, start at 09:00 | room E | text, results, solution | Correction will occur Monday 13 February, at 11:00 (Ferragina's office). For registration only, you can just send me an email stating that you accept the rank. Students that have passed only the “exercises” part can repeat only the “theory” part on any of the following exam dates, they have to register on the portal “ESAMI” writing in the notes “only theory”. Moreover, they can come +45mins after the start of the exam, to join the class that did in the first hour the “exercises” part. |

| 05/06/2023, start at 09:00 | room C | text, results | The “result” file reports the grades of the two-parts exam and a proposal for the final grade. You can accept it, writing me, or you can repeat one or both the parts. |

| 05/07/2023, start at 16:00 | room C | ||

| 24/07/2023, start at 09:00 | room C | text | |

| 07/09/2023, start at 16:00 | room A1 | text |

Background and Notes of the Course

I strongly suggest refreshing your knowledge about basic Algorithms and Data Structures by looking at the well-known book Introduction to Algorithms, Cormen-Leiserson-Rivest-Stein (third edition). Specifically, I suggest you look at the chapters 2, 3, 4, 6, 7, 8, 10, 11 (no perfect hash), 12 (no randomly built), 15 (no optimal BST), 18, 22 (no strongly connected components). Also, you could look at the Video Lectures by Erik Demaine and Charles Leiserson, specifically Lectures 1-7, 9-10, and 15-17.

Most of the content of the course will be covered by some notes I wrote in these years; for some topics, parts of papers/books will be used. You can download the latest version of these notes from this link. I state that this material will be published by Cambridge University Press as Pearls of Algorithm Engineering by me. This prepublication version is free to view and download for personal use only. Not for redistribution, resale or use in derivative works. © Paolo Ferragina 2022.

Lectures

Video-lectures of last year are available at the link and they are linked just for reference, if you wish to re-check something you listened in class. This year, lectures are in presence and the program of the course could be different.

The lectures below include also some EXTRA material, which is suggested to be read for students aiming to high rankings.

| Date | Lecture | Biblio |

|---|---|---|

| 19/09/2022 | Introduction to the course. Models of computation: RAM, 2-level memory. An example of algorithm analysis (time, space and I/Os): binary search. The B-tree (or B+-tree) data structure: searching and updating a big-set of sorted keys. | Chap. 1 of the notes. Read note 1 and note 2 on B-trees. |

| 20/09/2022 | Another example of I/Os-analysis: the sum of n numbers. The role of the Virtual Memory system. Algorithm for Permuting. Sorting atomic items: sorting vs permuting, comments on the time and I/O bounds. | Chap. 5 of the notes. |

| 21/09/2022 | Binary merge-sort, and its I/O-bounds. Snow Plow, with complexity proof and an example. Study also from my notes: Finding the maximum-sum subsequence (Chap. 2, no sect 2.5-). | Chap. 5 of the notes. |

| Students are warmly invited to refresh their know-how about: Divide-and-conquer technique for algorithm design and Master Theorem for solving recurrent relations; and Binary Search Trees | Lecture 2, 9 and 10 of Demaine-Leiserson's course at MIT | |

| 27/09/2022 | Multi-way mergesort: algorithm and I/O-analysis. Lower bound for sorting: comparisons and I/Os. EXTRA: Lower bound for permuting. | Chap. 5 of the notes |

| 28/09/2022 | The case of D>1 disks: non-optimality of multi-way MergeSort, the disk-striping technique. Quicksort: recap on best-case, worst-case. Quicksort: Average-case with analysis. | Chap. 5 of the notes |

| 03/10/2022 | Selection of kth ranked item in linear average time (with proof). 3-way partition for better in-memory quicksort. Bounded Quicksort. Multi-way Quicksort: definition, design and I/O-complexity. Selection of k-1 “good pivot” via Oversampling. Proof of the average time complexity of Multi-way Quicksort. | Chap. 5 of the notes |

| 04/10/2022 | Random sampling: disk model, known length (algorithms and proofs). Random sampling on the streaming model, known and unknown length. Reservoir sampling: Algorithm and proofs. Exercises on Random Sampling. | Chap. 3 of the notes. |

| 05/10/2022 | Randomized data structures: Treaps with their query (search a key, 3-sided range query) and update (insert, delete, split, merge) operations (with time complexity and proofs). | Notes by others. Study also Theorems and Lemmas. |

| 10/10/2022 | Randomized data structures: Skip lists (with proofs and comments on time-space complexity and I/Os). String sorting: comments on the difficulty of the problem on disk, lower bound. | See Demaine's lecture num. 12 on skip lists. Chap. 7 of the notes. |

| 11/10/2022 | LSD-radix sort with proof of time complexity and correctness. MSD-radix sort and the trie data structure. Multi-key Quicksort: algorithm and analysis. | |

| 12/10/2022 | Ternary search tree. Exercises. Fast set intersection, various solutions: scan, sorted merge, binary search, mutual partition, binary search with exponential jumps. A lower bound on the number of comparisons. | Chap. 6 of the notes, and Sect 9.2. |

| 17/10/2022 | Fast set intersection: more on Exponential jumps, and two-level scan. Interpolation Search. EXTRA: random shuffling (sect. 6.4). | See sect. 9.2 for interpolation search. |

| Students are warmly invited to refresh their know-how about: hash functions and their properties; hashing with chaining. | Lectures 7 of Demaine-Leiserson's course at MIT | |

| 18/10/2022 | Hashing and dictionary problem: direct addressing, simple hash functions, hashing with chaining. Uniform hashing and its computing/storage cost, universal hashing (definition and properties). An example of Universal Hash functions, with correctness proof. | Chap. 8 of the notes. All theorems with proof, except Theo 8.3 and 8.5 without proof (only the statement). |

| 19/10/2022 | Another example of Universal hashing: just the algorithm (no proof). The case of d-left hashing and the “power of two choices” result. Cuckoo hashing (with all proofs). | Don't read Perfect Hash (hence no sect. 8.5). |

| 24/10/2022 | Minimal ordered perfect hashing: definition, properties, construction, space and time complexity. Exercises. | |

| 25/10/2022 | Bloom Filter: properties, construction, query and insertion operations, error estimation (with proofs). Spectral Bloom Filter. EXTRA: Lower bound on BF space | No compressed BF. |

| 26/10/2022 | Prefix search: definition of the problem, solution based on arrays, Front-coding, two-level indexing based on compacted tries. Analysis of space, I/Os and time of all described solutions. | Chap. 9 of the notes: 9.1 and 9.4. No Locality Preserving front coding (9.3). |

| 31/10/2022 | Two-level indexing based on Patricia Trees, with exercises. | Chap. 9 of the notes: 9.5. Video |

| 02/11/2022 | Substring search: definition, properties, reduction to prefix search. The Suffix Array. Binary searching the Suffix Array: p log n. Suffix Array construction via qsort and its asymptotic analysis. Text mining use of suffix arrays. | Chap. 10 of the notes: 10.1, 10.2.1 (but no pages from 10-4 to 10-8), 10.2.3 (but no “The skew algorithm”, no “The Scan-based algorithm”), and sect 10.4.3. |

| 07/11/2022 | Prefix-free codes, the notion of entropy. Integer coding: the problem and some considerations. Shannon Theorem and optimal codes. The codes Gamma and Delta, space/time performance, and consideration on optimal distributions. | Chap. 11 of the notes. |

| 08/11/2022 | The codes Rice, PForDelta, Elias-Fano code. Definition of Rank/Select operations and comments. | |

| 09/11/2022 | Exercise on Elias-Fano and simplified NextGEQ procedure. Variable-byte code and (s,c)-dense code, with examples. | |

| 14/11/2022 | Lab activity (optional): A gentle introduction to the Succinct Data Structure Library: integer sequences. (Please, prepare in advance the environment for experimenting with the SDSL library, as indicated at the repo.) | slides SDSL and video |

| 15/11/2022 | Lab activity (optional): More on the Succinct Data Structure Library. | video. Solution of the exercise. |

| 16/11/2022 | Interpolative code. Rank and Select: definition and succinct solution for Rank. EXTRA: Solution for Select. | Chapter 15 of the notes. |

| 21/11/2022 | Compressed solution of Rank/Select based on Elias-Fano coding. Succinctly encoding binary trees, with examples. | No LOUDS which is for generic trees. |

| 22/11/2022 | Data Compression. Static, semistatic and dynamic models for frequency estimation. Huffman, with optimality (proof) and relation to Entropy | Chap. 12 of the notes. No PPM. |

| 23/11/2022 | Canonical Huffman: construction, properties. Huffman decompression. Arithmetic coding: properties, the converter tool. | |

| 28/11/2022 | More on Arithmetic coding: compression, decompression, and entropy bounds. | |

| 29/11/2022 | Dictionary-based compressors: properties and algorithmic structure. LZ77, LZSS, LZ78. | Chap 13.1 and 13.2. No LZW. Slides. |

| 30/11/2022 | Compressor bzip: MTF, RLE0, Wheeler-code, Burrows-Wheeler Transform. How to construct the BWT via qsort. How to invert the BWT. | Notes: 14.1, 14.2 and 14.3. |

| 05/12/2022 | Exercises | |

| 06/12/2022 | Exercises | |

| 07/12/2022 | Exercises | |

| 12/12/2022 | Exercises | |

| 13/12/2022 | Exercises |